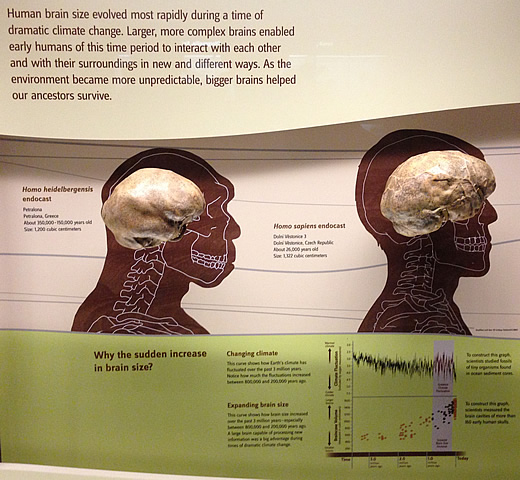

The picture above was taken at one of the best exhibits I’ve ever seen in any museum: the Hall of Human Origins at the Smithsonian National Museum of Natural History. The chart in the lower right-hand corner shows the correlation between brain size in humans and drastic changes in climate with an emphasis on the period between 800,000 and 200,000 years ago. (A nicer version of this chart is available on the exhibit’s website.)

It makes perfect sense that greater intelligence (as evidenced by larger brains) proved advantageous during times of unpredictable weather: the more humans were able to plan ahead, communicate, and work collaboratively, the more likely they were to survive. In fact, I’ve even read that the cranial capacity of fossilized skulls tends to be larger the farther from the equator they were found, suggesting a correlation between larger brains and harsher weather. In other words, in terms of natural selection, everything here appears to be in perfect working order.

But while there are no surprises in the relationship between brain size and climate change, there certainly is plenty of irony. The eventual results of all that hard-fought intelligence were the agricultural and industrial revolutions, precisely the technological advances that are most closely associated with modern climate change. Therefore, one could theorize that surviving rapid climate change bestowed upon humanity just enough intelligence to create even more rapid and dangerous climate change. One might even go so far as to say that the human brain is attempting to perpetuate its own growth.

I’ve read conflicting predictions of how this latest wave of climate change will ultimately affect brain size. Since equatorial temperatures will continue to expand latitudinally, it’s possible that the human brain could suddenly stop growing; on the other hand, due to all the challenges humanity faces as a result of rapid climate change, the size of our brains could continue to grow, perhaps at an even faster pace. Personally, I’m hoping for a future where we learn to use technology, intelligence, and even a little empathy to finally take control of our own evolutionary paths. Although it’s a little late for me to be genetically engineered, I wouldn’t mind a few multi-core petaflop processors embedded in my brain and at least one robotic arm.

Astronomers Avi Loeb and Edwin Turner recently